I tried every solution I could find, but had no luck. How can you tell what the LoRA is actually doing? Change to (deactivating the LoRA completely), and then regenerate. A basic web interface that allows you to run a local web server for generating images in …

UPDATE: 29 Sept – Some people have shared that using ‘pip install protobuf=3. invokeai that resides at the root of your InvokeAI directory. First, your text prompt gets projected into a latent vector space by the Training.

I'm trying to optimize my setup for my laptop, and I'm not sure where I should put the argument flags in the startup file, or whether the startup file I'm looking for really is "launch. They are the product of training the AI on millions of captioned images gathered from multiple sources. py", line 31, in run_sync Code to add a command line argument to the Auto1111 Google Colab notebook. Then Stable Diffusion takes those description prompts and up-draws the image via img2img.

One of the first questions many people have about Stable Diffusion is which license the model is published under and whether the generated art is free to use for personal and commercial projects.

arguments value a lot nicer to work with (can choice values of The following presents additional parameters you can try: python main. What to do after: To create a public link, set `share=True` in `launch Here is the list of videos, in the order to follow. All videos are very beginner friendly, not skipping any parts and covering pretty much everything. Playlist link on YouTube: Stable Diffusion

Add --no-half to your command line arguments and see if that helps.

I wanted to add command line arguments to the WebUI in TheLastBen's Fast Stable Diffusion notebook for Google Colab so that I can use my own Google Drive folders for my checkpoint and LoRA files, as I do in other notebooks such as NoCrypt's Colab Remastered. need to change port from default 3000 to something else (e. sh --xformers, otherwise it won't launch with that argument. Support for stable-diffusion-2-1-unclip checkpoints, which are used for generating image variations.

Models are the "database" and "brain" of the AI. Afterwards, whenever you want to run Stable Diffusion, you will need to run this. It's a great tool for anyone looking to learn and explore the possibilities of Stable Diffusion. The command prompt will give you a URL like this.

Looks like it is getting confused about where your GPU is. Launching Web UI with arguments: Traceback (most recent call last): \stable-diffusion-webui-directml-master\stable-diffusion-webui-directml-master\venv\lib\site-packages\torch_directml\device.

Hit the GET /download/ endpoint to download your image. The first needs no explanation, while api is used for certain extensions. Open the Command Prompt (CMD) and navigate to the directory where you have installed "stable-diffusion-webui". … With the command line version of Stable Diffusion, you can actually use a negative cfg scale value.
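As a rough illustration of the question above (passing extra arguments and custom model folders to the web UI), here is a minimal sketch that assembles an argument string. The helper `build_commandline_args` is hypothetical and not part of any notebook. The flag names (`--xformers`, `--no-half`, `--api`, `--share`, `--port`, `--ckpt-dir`, `--lora-dir`) are the ones the AUTOMATIC1111 web UI is generally understood to accept; check them against the `--help` output of your own install, since Colab notebooks may wrap or override them. The port number and the Drive paths are made-up examples.

```python
import shlex


def build_commandline_args(xformers=False, no_half=False, api=False,
                           share=False, port=None,
                           ckpt_dir=None, lora_dir=None):
    """Return a single argument string for the web UI launcher.

    Hypothetical helper: the flag names are assumed to match the
    AUTOMATIC1111 web UI and should be verified locally.
    """
    args = []
    if xformers:
        args.append("--xformers")      # note the double dash
    if no_half:
        args.append("--no-half")
    if api:
        args.append("--api")
    if share:
        args.append("--share")
    if port is not None:
        args += ["--port", str(port)]
    if ckpt_dir:
        args += ["--ckpt-dir", ckpt_dir]
    if lora_dir:
        args += ["--lora-dir", lora_dir]
    # shlex.join applies POSIX shell quoting to values that need it,
    # for example paths containing spaces.
    return shlex.join(args)


if __name__ == "__main__":
    # Example values only; the Drive paths below are placeholders.
    print(build_commandline_args(
        xformers=True,
        port=7861,
        ckpt_dir="/content/drive/MyDrive/sd/checkpoints",
        lora_dir="/content/drive/MyDrive/sd/loras",
    ))
```

The resulting string is what would typically go into the `COMMANDLINE_ARGS` setting of the startup file, or after the launcher command in a notebook cell. The quoting here follows POSIX shell rules, so a Windows batch file may need different quoting for paths with spaces.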

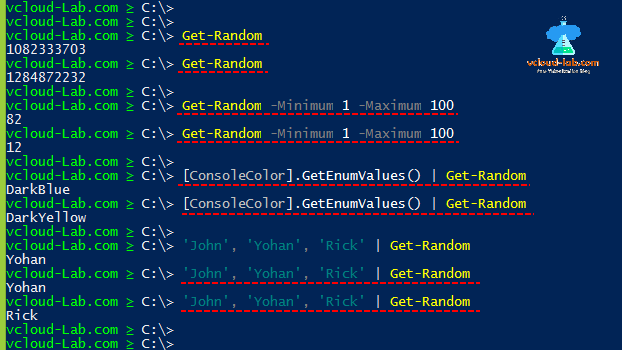

Press the Windows key (it should be to the left of the space bar on your keyboard), and a search window should appear. Select the GPU to use for your instance on a system with multiple GPUs. Configs are the parameters that will be used to train the inversion. py -p "cat with three eyes" - to set the prompt. 3:53 Modification of command parameters in webui-user.
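One common way to pick a GPU on a multi-GPU machine is the `CUDA_VISIBLE_DEVICES` environment variable, which limits which NVIDIA devices a CUDA process can see. The sketch below assumes an NVIDIA/CUDA setup (it does not apply to DirectML builds); the helper `env_for_gpu` is hypothetical, and the commented-out launch command is a placeholder for whatever script your install actually uses.

```python
import os


def env_for_gpu(gpu_index, base_env=None):
    """Return a copy of the environment restricted to one CUDA device.

    Hypothetical helper. CUDA_VISIBLE_DEVICES is read by the CUDA
    runtime when the child process starts.
    """
    env = dict(os.environ if base_env is None else base_env)
    env["CUDA_VISIBLE_DEVICES"] = str(gpu_index)
    return env


if __name__ == "__main__":
    env = env_for_gpu(1)  # expose only the second GPU (index 1)
    print(env["CUDA_VISIBLE_DEVICES"])
    # Placeholder launch, uncomment and adapt to your own install:
    # import subprocess
    # subprocess.run(["python", "launch.py"], env=env)
```

Because the variable is set on a copy of the environment, only the launched process is affected and the current shell session keeps seeing every GPU.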
